
The new autonomous systems labelled AI agents represent the latest evolution of AI technology and mark a new era in business. AI agents, in contrast to traditional AI models that simply follow commands and emit outputs in a fixed format, operate with a degree of freedom. According to Google, these agents can function on their own, without constant human supervision. The World Economic Forum describes them as systems that have sensors to perceive their environment and effectors to act on it. AI agents are expected to transform industries as they evolve from rigid, rule-based frameworks into sophisticated models adept at intricate decision-making. With unprecedented autonomy comes equally unprecedented responsibility. The additional benefits that agentic AI brings are accompanied by unique challenges that call for careful consideration, planning, governance, and foresight.

The Mechanics of AI Agents: A Deeper Dive

Traditional AI tools, such as Generative AI (GenAI) or predictive analytics platforms, rely on predefined instructions or prompts to deliver results. In contrast, AI agents exhibit dynamic adaptability, responding to real-time data and executing multifaceted tasks with minimal oversight. Their functionality hinges on a trio of essential components:

- Foundational AI Model: At the heart of an AI agent lies a powerful large language model (LLM), such as GPT-4, Llama, or Gemini, which provides the computational intelligence needed for understanding and generating responses.

- Orchestration Layer: This layer serves as the agent’s “brain,” managing reasoning, planning, and task execution. It employs reasoning techniques such as ReAct (Reasoning and Acting) or Chain-of-Thought prompting, which let the agent decompose complex problems into logical steps, evaluate outcomes, and adjust strategies dynamically, much as a human problem solver would.

- External Interaction Tools: These tools empower agents to engage with the outside world, bridging the gap between digital intelligence and practical application. They include:

  - Extensions: Enable direct interaction with APIs and services, allowing agents to retrieve live data (e.g., weather updates or stock prices) or perform actions like sending emails.

  - Functions: Offer a structured mechanism for agents to propose actions executed on the client side, giving developers fine-tuned control over outputs.

  - Data Stores: Provide access to current, external information beyond the agent’s initial training dataset, enhancing decision-making accuracy.

This architecture transforms AI agents into versatile systems capable of navigating real-world complexities with remarkable autonomy.
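The three components described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `fake_model` is a stand-in for a real LLM call, `get_weather` is a hypothetical extension that returns a canned value, and the `Action: name[argument]` / `Final:` text format is an invented convention loosely modeled on ReAct-style loops, not any vendor's actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def fake_model(prompt: str) -> str:
    """Stand-in for the foundational model (assumption: a real LLM goes here)."""
    if "Observation:" in prompt:
        return "Final: It is 18 C in Paris."
    return "Action: get_weather[Paris]"


def get_weather(city: str) -> str:
    """Hypothetical extension; a real one would call a weather API."""
    return f"18 C in {city}"


@dataclass
class Agent:
    model: Callable[[str], str]             # foundational AI model
    tools: Dict[str, Callable[[str], str]]  # external interaction tools
    max_steps: int = 5                      # hard stop so the loop cannot run forever
    trace: List[str] = field(default_factory=list)

    def run(self, task: str) -> str:
        """Orchestration layer: alternate model reasoning and tool calls."""
        prompt = f"Task: {task}"
        for _ in range(self.max_steps):
            reply = self.model(prompt)
            self.trace.append(reply)
            if reply.startswith("Final:"):
                return reply[len("Final:"):].strip()
            # Parse the invented "Action: tool_name[argument]" format.
            name, _, arg = reply[len("Action:"):].strip().partition("[")
            tool = self.tools.get(name)
            observation = tool(arg.rstrip("]")) if tool else f"unknown tool {name}"
            # Feed the tool result back so the model can decide the next step.
            prompt += f"\n{reply}\nObservation: {observation}"
        return "stopped: step limit reached"


agent = Agent(model=fake_model, tools={"get_weather": get_weather})
print(agent.run("What is the weather in Paris?"))
```

The step limit and the recorded `trace` are deliberate: even a toy loop benefits from a bound on autonomy and a record of what the agent decided at each step.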

Multi-Agent Systems: The Newest Frontier

The Multi-Agent System (MAS) market is in for tremendous growth, according to McKinsey, with a predicted growth rate of nearly 28% by 2030. Bloomberg recently predicted that AI breakthroughs will soon give rise to multi-agent systems: networks of AI agents working collaboratively towards ambitious objectives. These systems promise scalability, surpassing the benefits of agents operating alone.

Consider a smart city. Imagine multiple AI agents working alongside each other: one controlling the traffic signals, another managing traffic-directing units, and a third helping to reroute emergency responders. And all of this is happening in real time!

Governance is key here to prevent systemic failure, for example by restricting conflicting commands that could cause paralysis or dysfunction. Multi-agent systems can be given a degree of standardized freedom, but their benefits are only assured when clear protocols are prescribed and adhered to.
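One simple way to picture such a protocol is an arbiter that resolves conflicting commands before they reach shared infrastructure. The sketch below is a hedged illustration, not a description of any deployed system: the `Command` fields, the agent names, and the numeric priority scheme are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Command:
    agent: str      # which agent issued the command
    resource: str   # what it wants to control, e.g. an intersection
    action: str     # requested state
    priority: int   # higher wins; values would be assigned by policy


def arbitrate(commands: List[Command]) -> Dict[str, Command]:
    """Keep one command per resource: the highest-priority one.

    Ties go to the earliest command, so the outcome is deterministic.
    """
    chosen: Dict[str, Command] = {}
    for cmd in commands:
        current = chosen.get(cmd.resource)
        if current is None or cmd.priority > current.priority:
            chosen[cmd.resource] = cmd
    return chosen


# Hypothetical scenario: two agents disagree about the same junction.
requests = [
    Command("signal_agent", "junction_12", "red", priority=1),
    Command("emergency_agent", "junction_12", "green", priority=10),
    Command("signal_agent", "junction_7", "green", priority=1),
]
for resource, cmd in arbitrate(requests).items():
    print(resource, "->", cmd.action, "from", cmd.agent)
```

A fixed priority ordering is the bluntest possible rule. Its appeal is that every agent's command has a predictable outcome, which is the property that prevents the paralysis described above.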

Opportunities and Challenges

The potential of AI agents is game-changing, but their independence raises grave concerns. The World Economic Forum highlights several challenges that companies must deal with:

- Risks Associated with Autonomy: Ensuring safety and reliability becomes all the more difficult as agents become more independent. For example, an unmonitored agent could execute resource allocation that would trigger operational failures with cascading effects.

- Lack of Accountability: Trust is already fragile because of the opaque reasoning of ‘black box’ systems, and it matters even more in high-risk settings such as healthcare or finance. Ensuring transparency and accountability becomes non-negotiable.

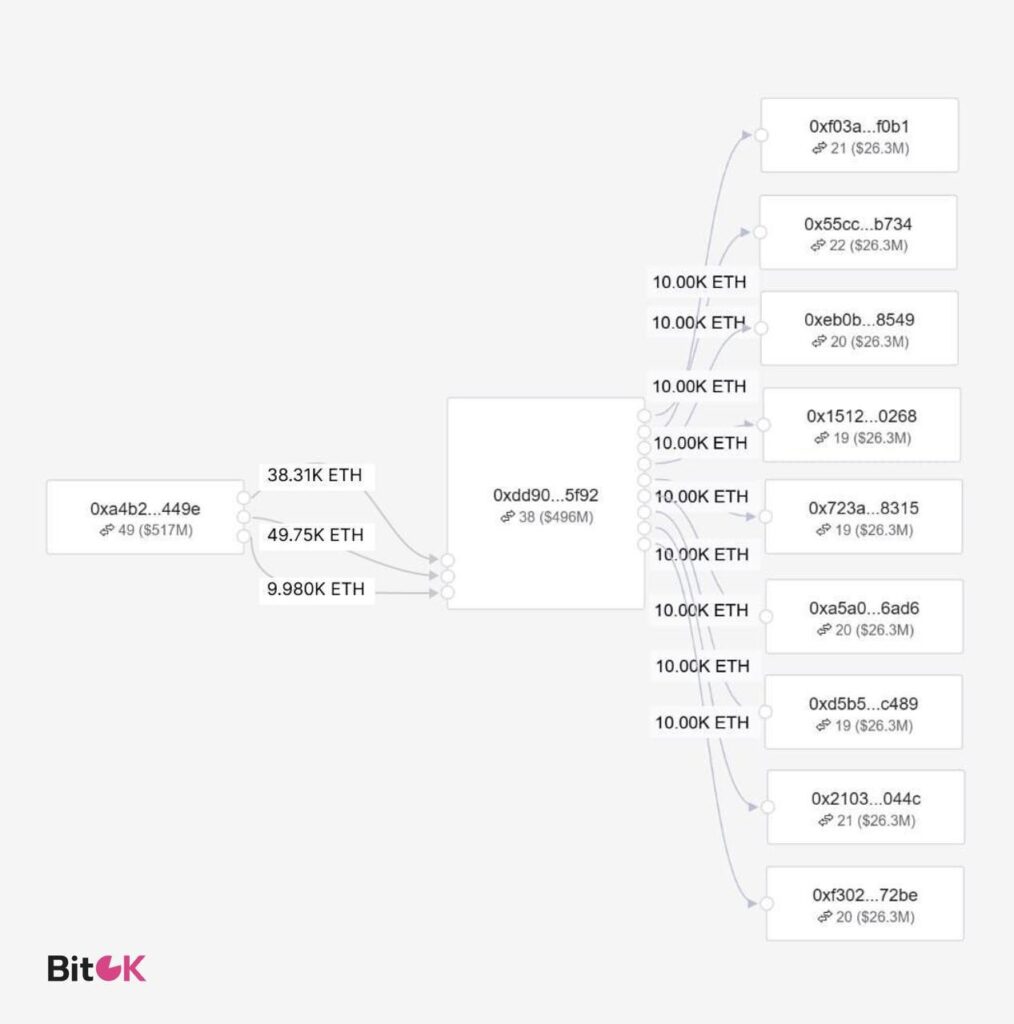

- Risks Surrounding Privacy and Security: Access to large amounts of sensitive information puts trust in jeopardy. An agent that can function effectively only with access to many sensitive systems and datasets raises the question: ‘How do we grant sufficient permissions without compromising security?’ Strong policies are needed to enforce standards that protect sensitive data and privacy and prevent breaches.

Guarding against these risks requires proactive measures such as consistent monitoring, adherence to ethical AI principles, and human-in-the-loop oversight of critical AI decisions so that control is retained. Organizations need to deploy auditing tools that monitor agents and correct their course when they deviate, so that control is regained and organizational goals are maintained.
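The permission and auditing ideas can be made concrete with a small sketch. It assumes a plain allowlist of action names and an in-memory audit log; the `ScopedAgent` class, the `read:invoices` style of action names, and the agent name are hypothetical, chosen only to illustrate least-privilege access with a reviewable trail.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class ScopedAgent:
    name: str
    allowed: Set[str]  # explicit allowlist: anything not listed is denied
    audit_log: List[Tuple[str, str, bool]] = field(default_factory=list)

    def request(self, action: str) -> bool:
        """Return True if the action is permitted; log every attempt."""
        permitted = action in self.allowed
        self.audit_log.append((self.name, action, permitted))
        return permitted

    def denied(self) -> List[str]:
        """Actions that were attempted but blocked, for human review."""
        return [action for _, action, ok in self.audit_log if not ok]


agent = ScopedAgent(name="billing_agent", allowed={"read:invoices"})
agent.request("read:invoices")     # within scope
agent.request("delete:invoices")   # outside scope, blocked and recorded
print(agent.denied())
```

Logging denied attempts as well as permitted ones matters here: a pattern of blocked requests is often the earliest sign that an agent is drifting from its intended path.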

The Human-AI Partnership

Even though AI agents function independently, their purpose is not to replace human reasoning but to augment it. The EU AI Act reminds us that human intervention is necessary in sensitive processes such as security or legal compliance. The best situation is one where humans and machines work together: agents perform the monotonous, repetitive work that requires processing large amounts of data, which frees humans to focus on strategic, creative, and ethical work.

In a logistics company, for instance, an AI agent may optimize delivery routes autonomously using traffic information, and a manager can then apply their judgment, approving the AI’s plan in light of customer preferences or other unforeseen factors. This maintains human control and supervision while also improving efficiency.
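The logistics example maps naturally onto an approval gate: the agent proposes, a person decides. The sketch below is illustrative only. `propose_route` is a placeholder that sorts stops alphabetically and assumes 15 minutes per stop rather than doing real optimization, and the `approve` callback stands in for whatever review step a manager would actually use.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class RoutePlan:
    stops: List[str]
    estimated_minutes: int


def propose_route(stops: List[str]) -> RoutePlan:
    """Placeholder optimizer (assumption): alphabetical order, 15 minutes per stop."""
    return RoutePlan(stops=sorted(stops), estimated_minutes=15 * len(stops))


def dispatch(
    stops: List[str], approve: Callable[[RoutePlan], bool]
) -> Optional[RoutePlan]:
    """Return the plan only if the human reviewer approves it; otherwise None."""
    plan = propose_route(stops)
    return plan if approve(plan) else None


# A manager's rule of thumb, expressed as a callback: reject plans over an hour.
def manager_review(plan: RoutePlan) -> bool:
    return plan.estimated_minutes <= 60


result = dispatch(["Depot C", "Depot A", "Depot B"], approve=manager_review)
print(result)
```

Nothing is dispatched unless the callback returns `True`, so the agent's autonomy covers planning while the final decision stays with a person.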

Guidelines for Implementing Agentic AI Strategically

Both Google and the World Economic Forum converge on a central idea: the responsible use of AI agents can result in outstanding value creation and unparalleled innovation. To capture that value while keeping risks manageable, businesses need to adopt the following practices:

- Develop Skills: Prepare the workforce to build, implement, and administer AI agents so that the technology is applied effectively.

- AI Ethics: Develop business governance frameworks that adhere to international benchmarks, the EU AI Act for instance, and that require agents to operate fairly and accountably.

- Ethics Boundaries: Pair any discretion delegated to an agent with explicit safeguards and controls that keep it from overstepping its remit or making decisions outside its assigned scope.

- Validation Check: Audit agents actively, stress test them, and refine their objectives so that their behavior stays aligned with organizational needs.

Final thoughts

By integrating reasoning and planning, agentic AI gains the ability to act on its own, and AI agents mark a pivotal leap in the evolution of artificial intelligence. Their potential to change fields like personalized healthcare or smart cities is phenomenal, but using AI carelessly is a grave mistake. For AI programs to be dependable companions, trust and security must anchor their development.

Organizations that find the right balance, enabling agents to innovate while maintaining human supervision, will be the ones leading the charge in this technological revolution. Agentic AI turns AI from an ordinary business tool into a paradigm shift that re-imagines autonomy. That future belongs to those who embrace its potential with clarity and caution.